The NIH has called for comments by April 24 on a proposed policy that would streamline peer review of grant proposals. Here’s a graphic of the proposed changes:

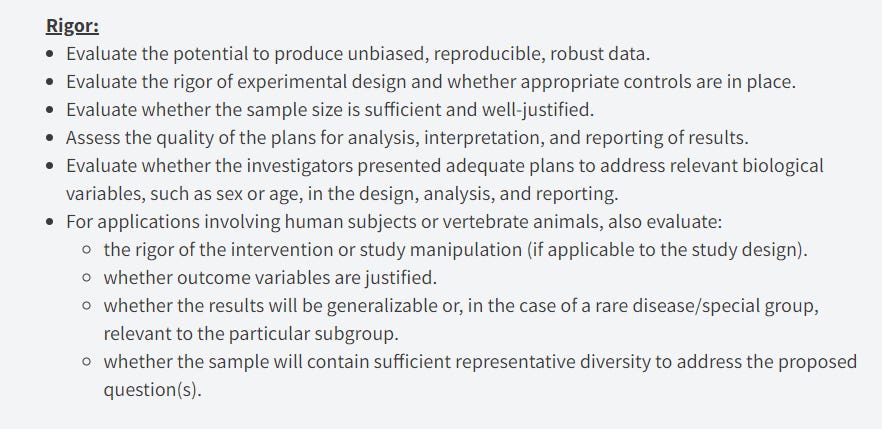

The Good Science Project applauds the NIH’s efforts in this regard! Indeed, given the results of efforts like the Reproducibility Project in Cancer Biology, the new focus on “rigor” as a central issue is especially important.

***

That said, there are some lingering issues with grant peer review that aren’t new at all.

In 1975, the NIH launched a major “Grants Peer Review Study Team” that produced a number of reports and recommendations (many thanks to Bhaven Sampat at Columbia for sending me the documents in question!).

A 1978 report from that team tried to address many of the comments raised by the scientific community about NIH’s grant peer review. Despite the passage of some 45 years, the issues might feel . . . very familiar:

First, some commenters felt that peer review was stacking the deck against outsiders:

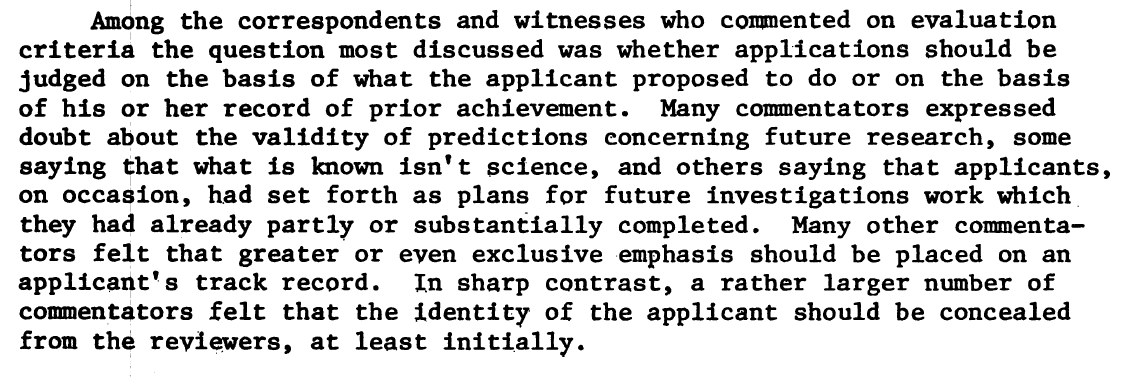

Second, some commenters thought that if NIH awards grants based on predictions as to what you will do in the next several years, it either “isn’t science” or is based on work that was “already partly or substantially completed”:

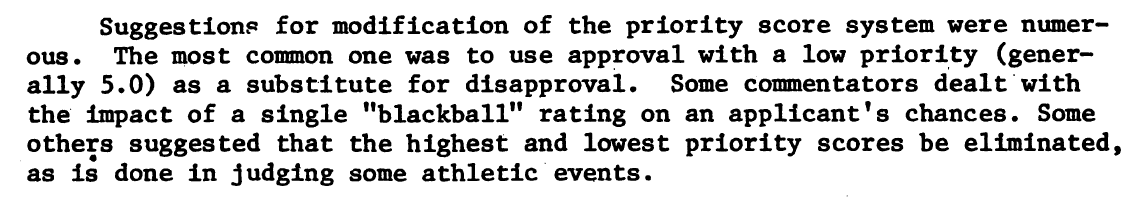

Third, some commenters pointed out that if even one reviewer gives a low rating to a proposal, that would harm its chances of funding.

Fourth, “almost 90 percent” of commenters said that NIH was “unreceptive to new ideas,” and that the trend was in the wrong direction because in a time of funding shortages, peer reviewers were “less willing to take risks.”

Fifth, comments said that interdisciplinary research was particularly likely to be reviewed unfavorably.

If you talk to practicing scientists or to NIH leaders speaking off the record, you’ll hear many of these same themes . . . 45 years later.

The NIH’s plan to streamline peer review is a great step in the right direction. But there are other ideas it should consider as well.

For example, the National Science Foundation recently announced that it “is considering piloting a funding model under which grant peer reviewers are given ‘golden tickets’ — a veto that they can deploy just once in a funding cycle to override the decisions of their colleagues.”

What good would this do? Well, the theory is that in a competitive environment, proposals veer towards “safe” ideas that are more likely to get universal approval. A golden ticket model might “mean that unorthodox, high-risk proposals that don’t necessarily have the unanimous backing of all referees end up being funded.”
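To make the mechanism concrete, here is a minimal sketch of how a golden ticket could change which proposals get funded. Everything in it is an assumption for illustration, not the NSF's actual design: the function name `select_funded`, the scoring scale (higher is better), the rule that ticketed proposals use up budget slots, and the made-up scores.

```python
from statistics import mean


def select_funded(scores, golden_tickets, slots):
    """Pick which proposals to fund (hypothetical rules, not NSF's).

    scores: {proposal: [reviewer scores]}, where higher is better.
    golden_tickets: {reviewer: proposal}, so each reviewer has at most one.
    slots: number of proposals the budget can cover.
    """
    # Ticketed proposals are funded first, regardless of their mean score.
    ticketed = sorted(set(golden_tickets.values()))[:slots]
    # Remaining slots go to the highest mean scores among the rest.
    rest = sorted(
        (p for p in scores if p not in ticketed),
        key=lambda p: mean(scores[p]),
        reverse=True,
    )
    return ticketed + rest[: slots - len(ticketed)]


# Invented scores: two "safe" proposals and one that splits the panel.
scores = {
    "safe_a": [7, 7, 7],
    "safe_b": [6, 7, 6],
    "risky": [9, 2, 3],
}

print(select_funded(scores, {}, slots=2))
print(select_funded(scores, {"reviewer_1": "risky"}, slots=2))
```

Under plain averaging, the two safe proposals win and the divisive one is dropped. When a single enthusiastic reviewer spends a ticket on it, the divisive proposal is funded and displaces the weaker of the two safe ones.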

It remains to be seen, of course, how the NSF will pilot such a model, and what the results will be. But we need to develop more ideas about how to address problems with grant peer review that have been identified since the mid-1970s (if not before).